    #AUTHOR
    # This is the base container for the Jetson TX2 board with drivers (with cuda)

    # base URL for NVIDIA libs
    ARG URL=

    # Update packages, install some useful packages
    RUN apt-get update && apt-get install -y apt-utils bzip2 curl sudo unp && apt-get clean && rm -rf /var/cache/apt

    RUN chown root /etc/passwd /etc/sudoers /usr/lib/sudo/sudoers.so /etc/sudoers.d/README
    RUN /tmp/Linux_for_Tegra/apply_binaries.sh -r / && rm -fr /tmp/*

    # Pull the rest of the jetpack libs for cuda/cudnn and install
    RUN curl $URL/libcudnn7-dev_7.0.5.15-1+cuda9.0_b -so /tmp/libcudnn-dev_b

    # Install libs: L4T, CUDA, cuDNN

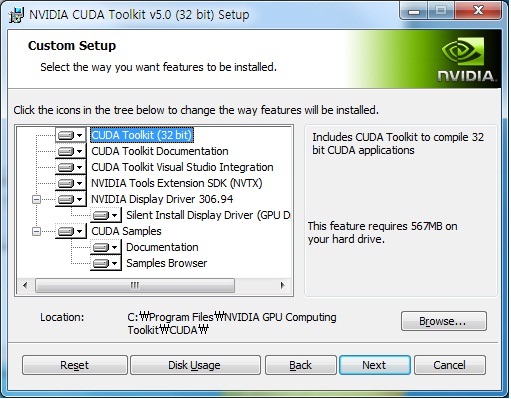

open-horizon/cogwerx-jetson-tx2/blob/master/Dockerfile.cudabase (`FROM aarch64/ubuntu`)

Just found this Dockerfile, which seems to have everything needed to install JetPack incl.

The next two parts are for readers who want some background on PyTorch and CUDA.

PyTorch is an open-source deep learning framework that is scalable and flexible for both testing and deployment. It allows for easy, flexible experimentation through its autograd (automatic differentiation) engine, which is designed for simple, Python-like execution. Since the release of PyTorch 1.0, the framework also offers graph-based execution and a hybrid front-end that allows seamless switching between modes, distributed training, and efficient, secure deployment on mobile devices.

PyTorch has 4 key features according to its homepage:

- PyTorch is production-ready: TorchScript switches smoothly between eager and graph modes, and TorchServe speeds up the path to production.
- PyTorch supports distributed training: the `torch.distributed` backend allows for efficient distributed training and performance optimization in research and production.
- PyTorch has a robust ecosystem: an expansive set of tools and libraries supports applications such as computer vision and NLP.
- PyTorch has native cloud support: it is well supported on the major cloud platforms, which allows frictionless development and easy scaling.

CUDA is a general parallel programming and computing platform built for NVIDIA graphics processing units (GPUs). With CUDA, developers can dramatically increase the performance of their programs by using GPU resources. In a GPU-accelerated program, the sequential portion of the workload runs on the CPU, which is optimized for single-threaded performance, while the compute-intensive portion, such as PyTorch tensor operations, runs in parallel across thousands of GPU cores via CUDA. When using CUDA, developers can code in popular languages such as C, C++, and Python, and express parallelism through extensions in the form of a few simple keywords. NVIDIA's CUDA Toolkit includes everything you need to build GPU-accelerated applications, including GPU-accelerated libraries, a compiler, development tools, and the CUDA runtime. You can learn more about CUDA, and download it, in NVIDIA's CUDA Zone.

We can verify the PyTorch CUDA 9.0 installation by running a short Python script to ensure that PyTorch is set up properly. The script creates a randomly initialized tensor and prints it; your output will look similar, except for the numbers. To check whether PyTorch can use both the GPU driver and CUDA 9.0, call `torch.cuda.is_available()`, which returns `True` when CUDA is enabled.
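The verification script itself did not survive on this page, so the following is only a minimal sketch of what such a check might look like, not the original code. The function name `check_pytorch_cuda` and the layout of the returned dictionary are made up for illustration; `torch.rand`, `torch.cuda.is_available()`, and `torch.version.cuda` are regular PyTorch calls. The import is wrapped so that the script still reports something useful when PyTorch is not installed.

```python
def check_pytorch_cuda():
    """Report whether PyTorch is importable and whether it can use CUDA.

    Hypothetical helper for illustration; the dictionary keys are not
    part of any PyTorch API.
    """
    report = {
        "torch_installed": False,
        "sample_tensor_shape": None,
        "cuda_available": False,
        "cuda_version": None,
    }
    try:
        import torch
    except ImportError:
        # PyTorch is missing, so there is nothing further to check.
        return report

    report["torch_installed"] = True

    # Create a randomly initialized 5x3 tensor; the values differ on every run.
    x = torch.rand(5, 3)
    print(x)
    report["sample_tensor_shape"] = tuple(x.shape)

    # True only if a usable GPU driver and CUDA runtime were found.
    report["cuda_available"] = torch.cuda.is_available()

    # CUDA version PyTorch was built against (None for CPU-only builds).
    report["cuda_version"] = torch.version.cuda
    return report


if __name__ == "__main__":
    for key, value in check_pytorch_cuda().items():
        print("{}: {}".format(key, value))
```

On a working install, `cuda_available` should be `True` and `cuda_version` should start with `9.0`. If `cuda_available` is `False` while `cuda_version` is set, PyTorch was built with CUDA support but cannot reach the driver or device at runtime.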
